There’s a problem with building skills for AI assistants. The more capable you make them, the more instructions you have to load upfront, and every instruction burns context tokens, even the ones the model never uses. So you end up choosing between a powerful system that chokes on its own documentation and a simple one that fits in memory but can’t do much.

I didn’t want to make that choice. So I built Reflex.

What it actually is

Reflex is a skill for Claude that works more like a plugin system than a traditional prompt. You say “reflex” followed by what you want (a module name, a chain of modules, or just a natural language description of the work) and a Python router figures out what to load. Only the instructions for the modules it selects enter the conversation. Everything else stays on disk.

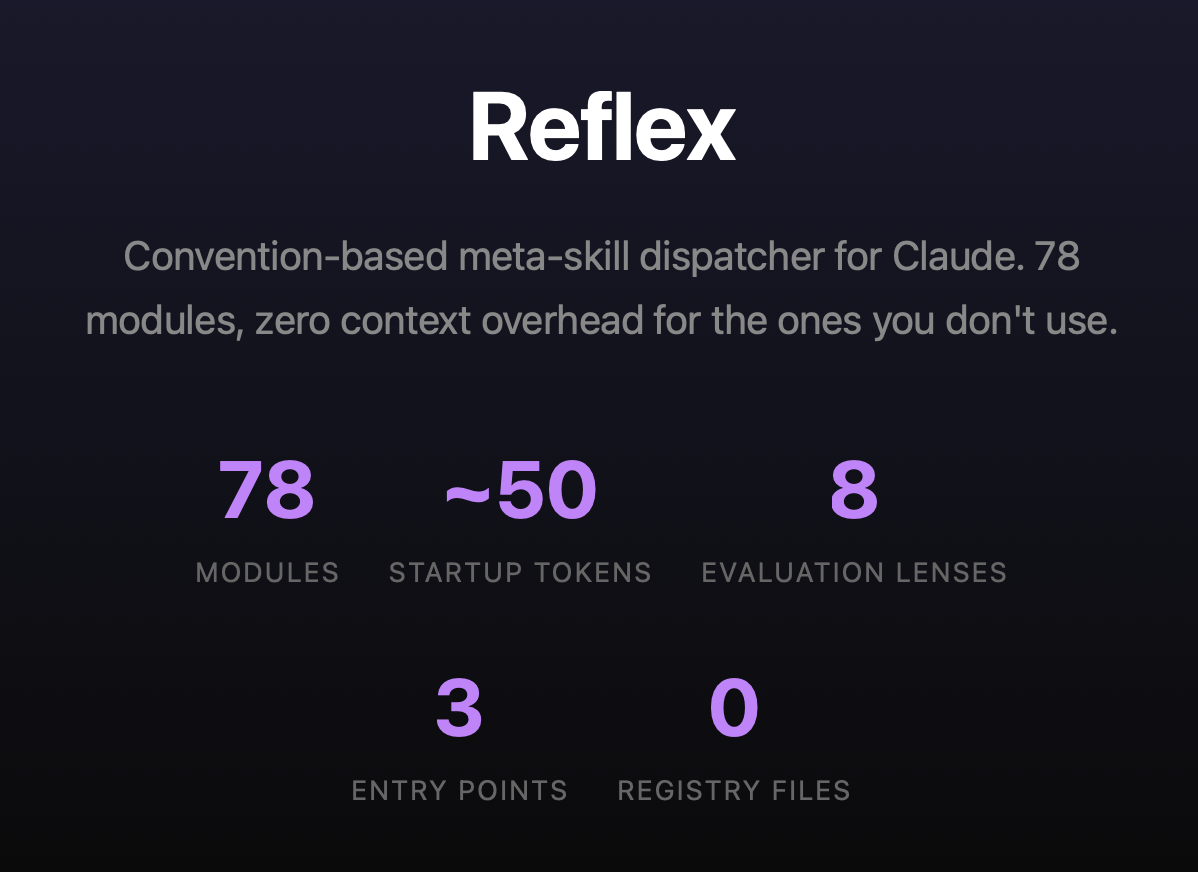

Right now it has 78 modules. The startup cost is about 50 tokens. Whether I add 10 more modules or 100 more, that number doesn’t change. The router handles discovery. The filesystem is the registry.
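To make "the filesystem is the registry" concrete, here is a minimal sketch of convention-based discovery. It is an illustration under assumptions, not Reflex's actual router: the `MODULE.md` filename, the function names, and the one-folder-per-module layout are all hypothetical.

```python
from pathlib import Path

# Assumed convention: each module is a folder holding one instruction file.
# The filename is hypothetical, not taken from Reflex.
INSTRUCTION_FILE = "MODULE.md"


def discover_modules(root: Path) -> dict[str, Path]:
    """Map module name -> instruction file for every folder under root that has one."""
    if not root.is_dir():
        return {}
    return {
        entry.name: entry / INSTRUCTION_FILE
        for entry in sorted(root.iterdir())
        if entry.is_dir() and (entry / INSTRUCTION_FILE).is_file()
    }


def load_instructions(root: Path, name: str) -> str:
    """Read only the requested module's instructions; the rest stay on disk."""
    modules = discover_modules(root)
    if name not in modules:
        raise KeyError(f"unknown module: {name}")
    return modules[name].read_text(encoding="utf-8")
```

With a layout like this, adding a module really is just adding a folder: nothing is registered anywhere, so the upfront cost does not depend on how many folders exist.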

I didn’t plan to build 78 modules. I started with maybe 12 — web search, competitive analysis, a report writer, a SWOT analyzer. Then I noticed that every time I wanted a new capability, I just created a folder, dropped in a markdown file with instructions, and it worked. No config changes. No code changes to the engine. The convention handled it.

That’s when I realized the architecture was the interesting part, not any individual module.

How the pieces connect

The thing I’m most proud of is the composition model. Modules connect with a + operator. When you write websearch+competitors+report, you’re building a pipeline: research a company, analyze the competitive landscape, write a report. Each step writes structured JSON to a shared workspace on disk. The next step reads it. The workspace is the data bus.
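The pipeline idea can be sketched in a few lines. This is a simplified stand-in, assuming each step is a plain Python function (in Reflex the steps are carried out by the model) and assuming a hypothetical `NN-name.json` file naming scheme for the workspace.

```python
import json
from pathlib import Path
from typing import Callable

# A step receives everything earlier steps wrote and returns JSON-serializable output.
Step = Callable[[dict], dict]


def parse_chain(expr: str) -> list[str]:
    """Split 'websearch+competitors+report' into ordered module names."""
    names = [part.strip() for part in expr.split("+")]
    if not all(names):
        raise ValueError(f"empty step in chain: {expr!r}")
    return names


def read_workspace(workspace: Path) -> dict:
    """Load every persisted output, keyed by module name (later runs win)."""
    upstream = {}
    for path in sorted(workspace.glob("*.json")):
        name = path.stem.split("-", 1)[1]  # strip the 'NN-' ordering prefix
        upstream[name] = json.loads(path.read_text(encoding="utf-8"))
    return upstream


def run_chain(expr: str, steps: dict[str, Step], workspace: Path) -> list[Path]:
    """Run each step in order, persisting its output before the next one starts."""
    workspace.mkdir(parents=True, exist_ok=True)
    written = []
    for index, name in enumerate(parse_chain(expr), start=1):
        output = steps[name](read_workspace(workspace))
        path = workspace / f"{index:02d}-{name}.json"
        path.write_text(json.dumps(output, indent=2), encoding="utf-8")
        written.append(path)
    return written
```

Steps never hand data to each other directly. Everything goes through files, so the intermediate state is still there to inspect after the chain finishes.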

This sounds simple, and it is — but the implications compound. Because every step persists its output, the chain becomes auditable. You can run a debrief module on any chain and it’ll trace which findings survived from research to final document, which were lost, and which appeared from nowhere (that last one matters more than you’d think).
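The three outcomes a debrief reports reduce to set arithmetic once findings can be identified. The sketch below assumes each finding carries a stable ID that survives from research to final document, which is a strong simplification; how Reflex actually matches findings is not described here, and the names are hypothetical.

```python
from dataclasses import dataclass
from typing import Iterable


@dataclass(frozen=True)
class Trace:
    survived: frozenset[str]     # in research and in the final document
    lost: frozenset[str]         # in research, missing from the final document
    unsupported: frozenset[str]  # in the final document with no research behind it


def trace_findings(research_ids: Iterable[str], final_ids: Iterable[str]) -> Trace:
    """Compare finding IDs from the research step against the final deliverable."""
    research, final = frozenset(research_ids), frozenset(final_ids)
    return Trace(
        survived=research & final,
        lost=research - final,
        unsupported=final - research,
    )
```

The `unsupported` set is the "appeared from nowhere" case: content in the deliverable that no upstream step produced.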

Some modules have built-in dependencies. The go-to-market strategy module, for instance, automatically chains through web search, competitive analysis, positioning, and audience profiling before it even starts writing strategy. You just type reflex gtm-strategy target:"my product" and the system resolves the full pipeline. But if you’ve already run the research in a previous step, the dependencies check the workspace first and skip what’s already there. No redundant work.
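Here is one way the "check the workspace first" behavior could look. It is a sketch under assumptions: the dependency table, the `audience` module name, and the convention that a finished step leaves `<name>.json` in the workspace are all illustrative, not Reflex's real metadata.

```python
from pathlib import Path

# Hypothetical dependency table; the order mirrors the gtm-strategy description above.
DEPENDENCIES: dict[str, list[str]] = {
    "gtm-strategy": ["websearch", "competitors", "positioning", "audience"],
}


def resolve(target: str, workspace: Path,
            deps: dict[str, list[str]] = DEPENDENCIES) -> list[str]:
    """Return the steps still needed to run target, dependencies first."""
    plan: list[str] = []
    seen: set[str] = set()

    def visit(name: str, is_target: bool = False) -> None:
        if name in seen:
            return
        seen.add(name)
        # Assumed convention: a finished step leaves <name>.json in the workspace.
        # The requested module itself always runs, even if an old output exists.
        if not is_target and (workspace / f"{name}.json").exists():
            return
        for dep in deps.get(name, []):
            visit(dep)
        plan.append(name)

    visit(target, is_target=True)
    return plan
```

On an empty workspace this plans the full pipeline. Once `websearch.json` and `competitors.json` exist, the same call plans only the remaining steps.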

The self-improvement loop

This is where it gets interesting. I built a module called perspective that applies an evaluation lens to whatever the previous step produced. The lens doesn’t score the output; it reveals what the output can’t see about itself. Then it produces the revision: not notes about what to fix, but the actual revised deliverable.

There are eight built-in lenses: missed implications, wrong framing, hidden assumptions, strategic avoidance, and so on. The newest one — unsupported confidence — came from a live test where I found that perspective was great at catching what was missing or misframed, but it couldn’t catch claims that appeared from nowhere. An email draft mentioned “users loved it” when no user data existed. That’s not a gap in reasoning. That’s invention. So I built a lens for it.

The real trick is what happens when you chain perspective twice. In email+perspective+perspective, the first pass might catch strategic avoidance — the email played it safe. The second pass operates on the already-corrected version and finds a different problem, like hidden assumptions introduced by the fix itself. Each pass applies a different lens because the upstream context tells it what was already examined. No resolver, no rotation logic. The model just reads what was done and picks a different angle. The system’s intelligence is in the connections.

Evidence certification

The latest addition is a module called certify. It scans every artifact in the workspace and produces a structured assessment: here are the 18 claims in this document, 8 are sourced to specific URLs, 4 are inferences, 3 are assumptions, and here’s what we didn’t check.

Six of the formatter modules know to look for certification data. If it’s there, they embed it as an appendix in the final document. If it’s not, they work exactly the same as before. Zero overhead unless you opt in.
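The opt-in behavior amounts to a single existence check in the formatter. The sketch below is an assumption-laden illustration: the `certification.json` filename, the `claims`/`basis`/`source` field names, and the plain-text appendix format are hypothetical, and a real formatter would emit a Word document rather than a string.

```python
import json
from collections import Counter
from pathlib import Path

CERT_FILE = "certification.json"  # assumed filename, not taken from Reflex


def evidence_appendix(workspace: Path) -> str:
    """Build an appendix from certification data, or return '' if there is none."""
    cert_path = workspace / CERT_FILE
    if not cert_path.is_file():
        return ""
    claims = json.loads(cert_path.read_text(encoding="utf-8"))["claims"]
    counts = Counter(claim["basis"] for claim in claims)
    summary = ", ".join(f"{n} {basis}" for basis, n in sorted(counts.items()))
    lines = ["Evidence appendix", f"{len(claims)} claims: {summary}"]
    for claim in claims:
        source = claim.get("source") or "no source recorded"
        lines.append(f"- [{claim['basis']}] {claim['text']} ({source})")
    return "\n".join(lines)


def format_report(body: str, workspace: Path) -> str:
    """Append the evidence appendix only when certify has run."""
    appendix = evidence_appendix(workspace)
    return f"{body}\n\n{appendix}" if appendix else body
```

Without a certification file, `format_report` returns the body untouched, so chains that never call certify behave exactly as before.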

So the chain websearch+gtm-strategy+certify+report produces a Word document with a professional GTM strategy and an evidence appendix that maps every claim to its source. When someone asks “is this grounded in real data?” the answer is in the document itself. You don’t have to trust the prose. You can check.

The persona layer

I had a problem: the system was powerful but the grammar was intimidating. websearch+competitors+positioning target:"fintech" audience:"investors" produces extraordinary results, but if you don’t know the syntax, you get nothing. Meanwhile, simpler tools offer guided conversations that feel approachable even if they’re less capable.

So I built a persona system. You type reflex persona copilot and you get a thinking partner that has the full module registry loaded. You just talk. The copilot recognizes when you need research, when you need a deliverable, when you need stress-testing, and it silently dispatches the right modules.

The interesting architectural decision was keeping personas separate from modules. Modules are bounded — they take input, produce output, and end. That’s what makes them composable. A persona is persistent — it stays active across the whole conversation. If I’d made the copilot a module, it would have been chainable. websearch+copilot would have been valid syntax, and the result would have been incoherent. So personas live in a parallel directory. The module engine never sees them. The filesystem is the type system.
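A small sketch of how a directory split can act as a type system. The `modules/` and `personas/` directory names and both function names are assumptions for illustration. What matters is that the chain resolver only ever looks in one of the two directories.

```python
from pathlib import Path


def names_in(directory: Path) -> set[str]:
    """Names of the sub-folders in a directory (empty if it does not exist)."""
    if not directory.is_dir():
        return set()
    return {p.name for p in directory.iterdir() if p.is_dir()}


def validate_chain(expr: str, root: Path) -> list[str]:
    """Resolve chain steps against modules/ only; personas are invisible here."""
    modules = names_in(root / "modules")
    steps = [step.strip() for step in expr.split("+")]
    unknown = [step for step in steps if step not in modules]
    if unknown:
        raise ValueError(f"not a module: {', '.join(unknown)}")
    return steps


def load_persona(name: str, root: Path) -> Path:
    """Personas are looked up in their own directory, by a separate entry point."""
    if name not in names_in(root / "personas"):
        raise ValueError(f"not a persona: {name}")
    return root / "personas" / name
```

Under this layout, websearch+copilot fails validation without any explicit "is this a persona?" check, because copilot simply does not exist where the chain resolver looks.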

Getting the copilot to actually use the modules instead of Claude’s built-in tools was its own journey. The first version was polite about it — “reach for modules when the user needs real information.” Claude cheerfully ignored that and used its native web search every time. Turns out, when you give a model two ways to do something and one is structurally easier, it’ll take the easy path regardless of what the instructions say.

The fix wasn’t more aggressive instructions. It was making the copilot say out loud, at the start of every conversation, that it would use the module system. A spoken commitment. It sounds almost too simple, but it works — the model is less likely to silently violate a promise it made three messages ago. That, plus making sure the formatter modules actually deliver the final document (so there’s no gap for native tools to fill), closed the loop.

The design philosophy

I keep coming back to five ideas:

Convention over configuration. Adding a module means adding a folder. If a change requires modifying the engine, the design is wrong.

Slow is steady, steady is fast. The module path takes more steps than asking Claude directly. Each step writes evidence to disk. By the time the deliverable ships, every claim traces to a source. The fast path skips those steps and produces work you can’t audit.

Epistemic honesty as architecture. The certify module, the lens concern convention, the unsupported-confidence lens — these are the system’s immune response against its own tendency to sound confident about things it invented.

Composition over comprehension. No single module tries to do everything. When audience profiling improves, every module that depends on it improves automatically.

The user chose this. When someone loads the copilot, they opted into a methodical system. The architecture respects that choice.

If you want to see the architecture in detail, there’s an interactive visualization that walks through the progressive disclosure model, chain composition, evidence pipeline, and persona system. The full repo has everything.